In the previous newsletter, I made the case that learning analytics fails not because of a lack of data or technology, but because of a lack of clarity about purpose. Analytics exists to support decisions. Not to report activity. Not to fill dashboards. But to help the people who matter most in your organization make better choices about learning, skills, and performance.

That argument tends to land well. Most L&D professionals I share it with nod immediately. The logic is hard to argue with.

And then comes the follow-up question, which is usually something like: "Fine. But where do we even start?"

This newsletter is my answer to that question. And it begins with something that tends to surprise people.

You almost certainly already have more relevant data than you think.

The assumption that holds us back

There is a persistent belief in L&D that the reason analytics is not working is that the data is not there yet. That before anything useful can happen, new systems need to be implemented, integrations need to be built, and data infrastructure needs to mature. Analytics becomes something that will happen properly in the future, once the conditions are right.

I understand where this belief comes from. Data in most organizations is genuinely messy. It lives in silos. It is inconsistently defined. It is hard to extract and harder to connect. All of that is real.

But the conclusion that nothing useful can be done until everything is clean and connected is wrong. And acting on that conclusion causes organizations to delay some of the most valuable analytical work they could be doing: work that does not require perfect infrastructure, just clearer thinking.

The more honest starting point is this: most organizations are already generating an enormous amount of data that is relevant to the decisions L&D, HR and business leadership need to make. The problem is not that the data does not exist. The problem is that nobody has mapped it to the decisions that matter.

That mapping exercise, what I think of as a bottom-up data inventory, is where this newsletter begins.

Starting from the bottom: what data do you actually have?

A bottom-up data inventory is exactly what it sounds like. Rather than starting with an ambitious vision of what your analytics ecosystem should eventually look like, you start with the humbler and more practical question: what data exists right now, and where does it live?

In most organizations, the answer spans a much wider territory than people initially assume. Learning management systems hold completion records, assessment scores, enrollment patterns, and time stamps. HR systems hold role data, tenure, mobility history, and performance review outcomes. Operational systems hold the business metrics that ultimately reflect whether learning is working: sales figures, quality scores, service resolution times, error rates, customer satisfaction data. Collaboration tools hold signals about how people work, communicate, and seek help. Even calendar and meeting data can reveal patterns about how time is being spent and where friction exists in the organization.

None of this data was designed with learning analytics in mind. Much of it is imperfect. Some of it is difficult to access. But it exists. And when you begin to catalogue it systematically, source by source, field by field, with an honest assessment of quality and accessibility, something useful starts to emerge.

You begin to see what is actually available, not just what you wish you had. And that is the foundation on which real analytical work can be built.

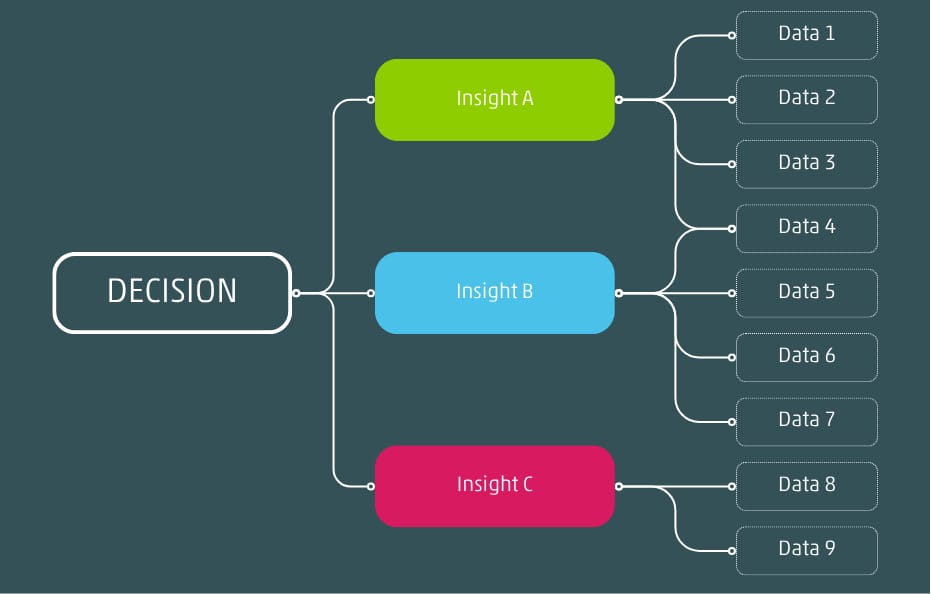

Decisions require insights and insights require data

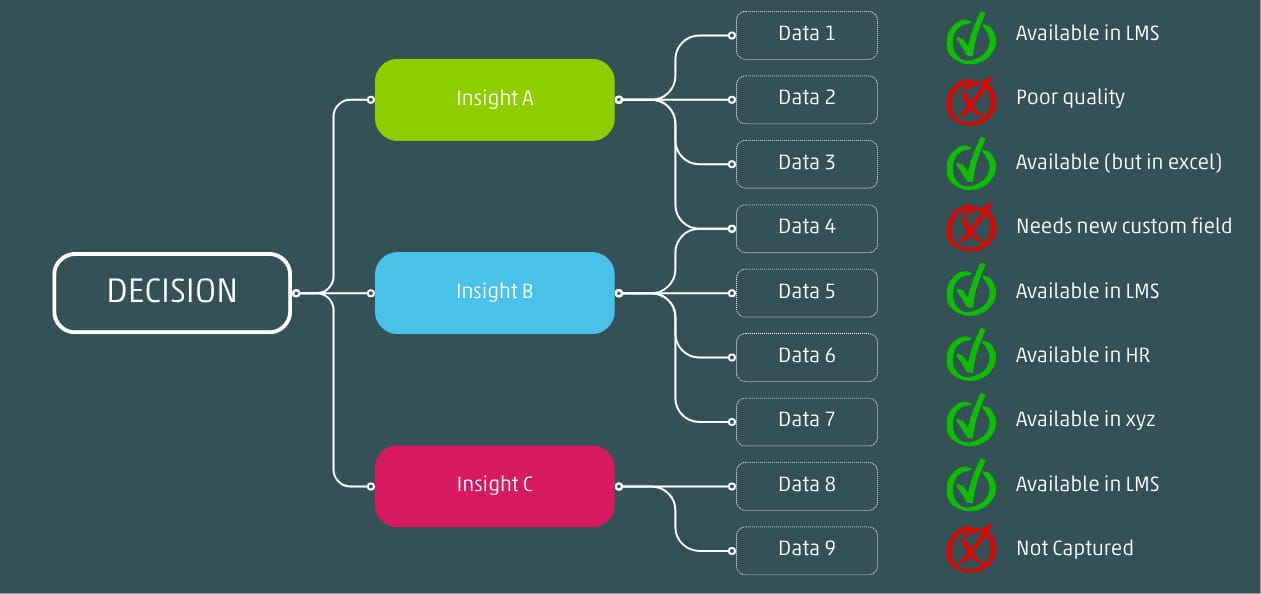

At SLT Consulting, this is one of the first things we do when working with a new organization. Before any tool is selected, before any dashboard is designed, we sit down and map the data landscape as it actually exists. What systems are in place. What they capture. How reliable that data is. How accessible it is in practice. And critically, what is missing. That last question turns out to be just as important as the inventory itself, because the gaps in the data are often as revealing as what is there.

Starting from the top: what decisions actually matter?

The bottom-up inventory tells you what data exists. But data without purpose is just information. To make the inventory useful, you need to run a parallel exercise from the top down: a structured conversation about the decisions that matter most to L&D and HR leadership, and to the broader organization.

This conversation is harder than it sounds, for a specific reason. Most leadership teams, when asked what decisions they need to make, default to the language of goals and priorities rather than decisions. They talk about "improving retention" or "accelerating skills development" or "increasing the impact of learning." These are outcomes, not decisions. And outcomes alone, however worthy, are not sufficient to tell you what data you need.

A decision is different. A decision is a specific choice between alternatives, made by a specific person or team, at a specific point in time, with specific consequences. "Should we invest next year's learning budget in leadership development or technical upskilling?" is a decision. "Which roles have the longest time to proficiency, and how can we shorten this?" leads to a decision. "Are we better off building this capability internally or partnering externally?" is a decision.

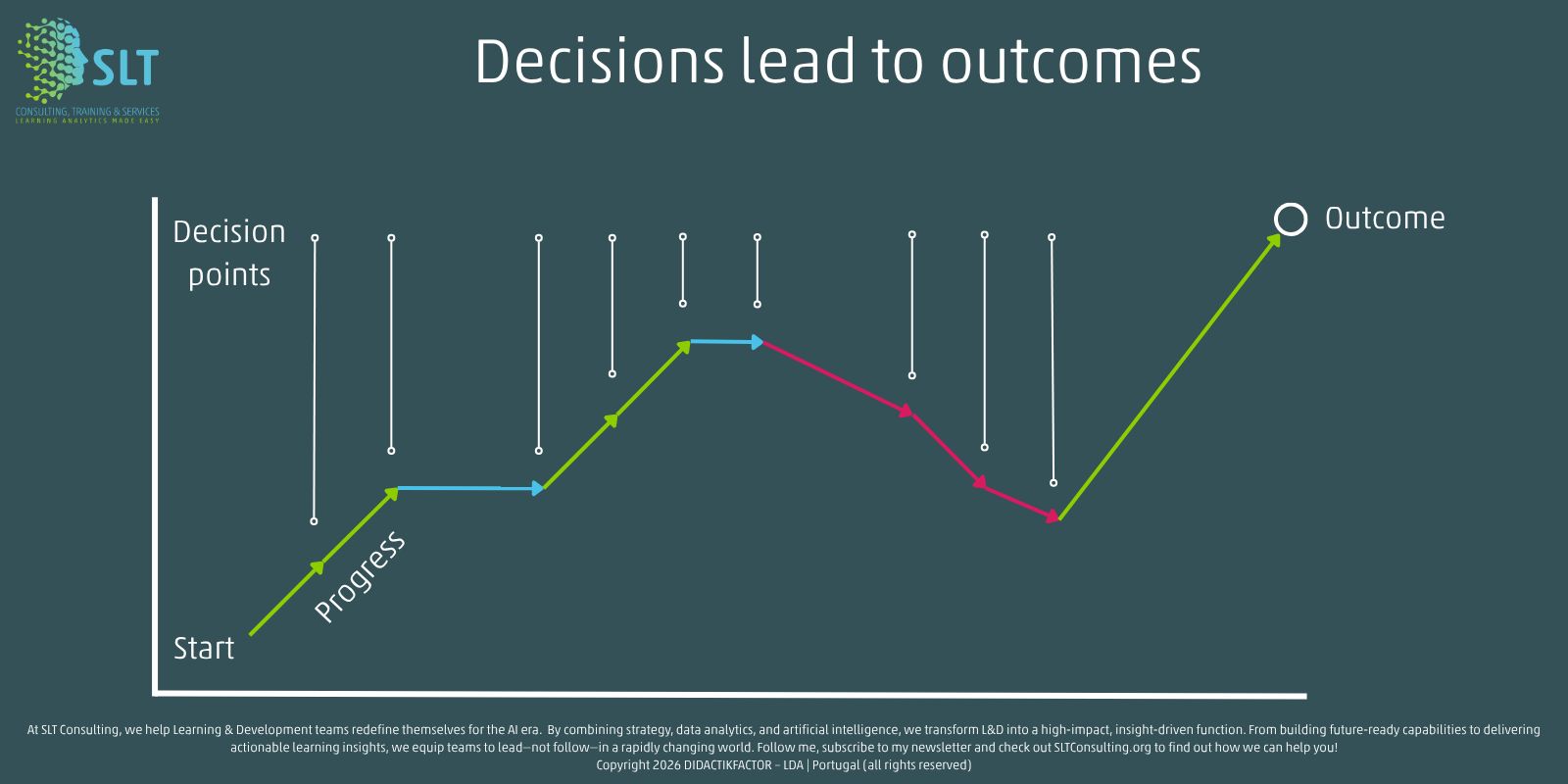

A specific outcome is always the result of a series of conscious and unconscious decisions

When you articulate decisions at this level of specificity, the data requirements become much clearer. You can look at your bottom-up inventory and ask, for each decision: do we have what we need to inform this choice? If yes, how good is the data? If no, what is missing?

That question, "what is missing?", is where the two exercises connect. And it is where the most important analytical work actually begins.
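To make the connection between the two exercises tangible, here is a minimal sketch in Python of how a decision list could be checked against an inventory. Everything in it is an assumption for illustration: the field names, the systems, the three-level reliability rating, and the two example decisions are hypothetical, and a spreadsheet would do the same job.

```python
from dataclasses import dataclass

@dataclass
class DataField:
    source: str        # system where the field lives (hypothetical examples)
    name: str          # field name as it will be referred to in decisions
    reliability: str   # "high", "medium" or "low": a judgment call, not a measurement
    accessible: bool   # can the field actually be extracted in practice?

# Bottom-up: a (hypothetical) inventory of what exists today.
inventory = [
    DataField("LMS", "completion_date", "high", True),
    DataField("LMS", "training_duration", "low", True),
    DataField("HR", "role_start_date", "high", True),
    DataField("HR", "review_outcome", "medium", False),
]

# Top-down: (hypothetical) decisions and the fields needed to inform them.
decisions = {
    "Reduce time spent on mandatory training?": ["training_duration", "completion_date"],
    "Which roles need faster onboarding?": ["role_start_date", "review_outcome", "error_rate"],
}

def assess(decisions, inventory):
    """For each decision, list fields that are missing, inaccessible, or low quality."""
    by_name = {field.name: field for field in inventory}
    report = {}
    for decision, needed in decisions.items():
        found = [by_name[n] for n in needed if n in by_name]
        report[decision] = {
            "missing": [n for n in needed if n not in by_name],
            "inaccessible": [f.name for f in found if not f.accessible],
            "low_quality": [f.name for f in found if f.accessible and f.reliability == "low"],
        }
    return report

report = assess(decisions, inventory)
for decision, gaps in report.items():
    print(decision, gaps)
```

The output is simply a list of gaps per decision, which is usually enough to start a focused conversation with IT or HR about what to fix first.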

The gap is more manageable than it looks

When organizations first do this mapping exercise, the gap between the data they have and the data they need can look discouraging. There are almost always decisions that matter but cannot yet be properly informed. There are almost always data sources that exist in principle but are too fragmented, too inconsistent, or too inaccessible to be useful in practice.

But a few things tend to become clear quite quickly that make the gap feel more manageable.

DATA QUALITY: First, not all decisions require perfect data. Many of the most important choices L&D and HR leaders make can be meaningfully improved by data that is imperfect but directionally reliable. The question is not whether the data is clean enough to publish in a research paper. The question is whether it is good enough to shift a decision in a better direction than gut feel alone would produce. That bar is often lower than people assume.

For example: if you want to decide whether to reduce the time employees spend on mandatory training, you need data on how long programs take to complete. This data is often of questionable quality: sometimes it is missing, sometimes it is recorded as zero, and in other cases it is simply inaccurate, either too high or too low. With clear assumptions about training durations, or statistical ‘tricks’ like taking averages or removing outliers, you can still perform a very decent analysis while (I hope) cleaning your data at the same time.
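A minimal sketch of what such a ‘trick’ could look like in Python, using only the standard library. The recorded durations are made up, the 240-minute ceiling is an assumption you would replace with your own, and I use the median as the average here because it is less sensitive to the extreme values you are trying to remove.

```python
from statistics import median

# Hypothetical recorded durations in minutes for one program.
# None means the value is missing; 0 means nothing useful was recorded.
recorded = [45, 0, None, 50, 40, 600, 55, 0, 48]

def clean_durations(values, assumed_max=240):
    """Replace missing, zero, or implausibly long durations with the median
    of the plausible values. assumed_max is an explicit assumption."""
    def is_plausible(v):
        return v is not None and 0 < v <= assumed_max

    plausible = [v for v in values if is_plausible(v)]
    if not plausible:
        raise ValueError("no plausible durations to base an estimate on")
    typical = median(plausible)
    cleaned = [v if is_plausible(v) else typical for v in values]
    return cleaned, typical

cleaned, typical = clean_durations(recorded)
print("typical duration:", typical)
print("estimated total minutes:", sum(cleaned))
```

The estimate is only as good as the assumption behind it, so it is worth writing the assumed ceiling down next to the result.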

EASY-TO-FILL-GAPS: Second, not all gaps are equal. Some missing data points are genuinely difficult to obtain and may require significant investment to address. Others are surprisingly accessible once someone decides to look. Prioritizing the gaps that matter most for the decisions that matter most is a much more tractable problem than trying to fix all data quality issues simultaneously.

For example: in L&D we tend to use program titles and descriptions to carry other data points, such as target audience, proficiency level, and language, to name a few. Great idea, but almost entirely useless for analytics. Most learning platforms, however, allow you to create custom course fields, so that this extra information sits in dedicated fields and can be used for analytics. A simple solution that makes more data available.

While not all data might be available, the use of assumptions and proxy data could still generate useful insights to support decisions.

DATA DICTIONARY: Third, the inventory itself creates value before any analysis has been done. Such an inventory can be documented in what we refer to as a data dictionary. And simply knowing what data exists, where it lives, and how reliable it is gives L&D a clearer picture of its analytical capability than most teams have ever had. It makes conversations with IT, HR, and business leadership more specific and more productive. And it reveals opportunities that would otherwise remain invisible.

A practical illustration

Let me make this concrete with an example.

Imagine an L&D leadership team that wants to get better at answering a question their business partners keep asking: how long does it take someone to become truly productive in a new role? This is a decision-relevant question. The answer affects hiring decisions, onboarding investment, internal mobility choices, and budget allocation for development.

A bottom-up inventory might reveal that the organization already holds HR data on start dates and role transitions, manager assessment data from ninety-day reviews, operational data on output quality and error rates for certain roles, and LMS data on what learning was completed during the onboarding period and when.

None of these data sources was designed to answer the time-to-productivity question. But combined and connected, they begin to tell a story. You can start to see patterns: which roles take longest to reach consistent performance, which onboarding interventions are associated with faster ramp-up, which manager behaviors correlate with quicker productivity, and where the biggest variation exists across teams and locations.

This is not a sophisticated analytics project. It does not require a new platform. It requires connecting data that already exists, around a decision that already matters, using a question that was always worth asking.

That is decision intelligence in practice. And it is available to most organizations right now, with the data they already have.
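For readers who like to see the mechanics, here is a rough sketch in Python of how two of those sources could be connected. All of it is hypothetical: the employee IDs, the dates, the quality scores, and above all the definition of "productive" as the first quality score of 80 or higher. Your own definition will differ, and agreeing on it is part of the work.

```python
from datetime import date
from statistics import median

# Hypothetical HR extract: employee -> (role, start date in that role)
hr = {
    "e1": ("support", date(2024, 1, 8)),
    "e2": ("support", date(2024, 2, 5)),
    "e3": ("sales", date(2024, 1, 15)),
}

# Hypothetical operational extract: (employee, measurement date, quality score 0-100)
quality = [
    ("e1", date(2024, 2, 5), 70), ("e1", date(2024, 3, 4), 85),
    ("e2", date(2024, 3, 4), 82),
    ("e3", date(2024, 3, 11), 75), ("e3", date(2024, 5, 13), 90),
]

THRESHOLD = 80  # assumed definition of "productive"

def days_to_productive(hr, quality, threshold=THRESHOLD):
    """Days between role start and the first score at or above the threshold."""
    first_hit = {}
    for emp, when, score in quality:
        if emp in hr and score >= threshold:
            if emp not in first_hit or when < first_hit[emp]:
                first_hit[emp] = when
    return {emp: (when - hr[emp][1]).days for emp, when in first_hit.items()}

def median_by_role(hr, days):
    """Median days to productive per role."""
    by_role = {}
    for emp, d in days.items():
        by_role.setdefault(hr[emp][0], []).append(d)
    return {role: median(vals) for role, vals in by_role.items()}

days = days_to_productive(hr, quality)
by_role = median_by_role(hr, days)
print(by_role)
```

Employees who never reach the threshold simply drop out of this calculation, which is itself a pattern worth looking at separately.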

What you can do this week

You do not need a project team or a consulting engagement to begin this work. You can start with two conversations.

The first is internal to L&D. Gather the people who know your data landscape and ask: what systems do we use, what do they capture, and how reliable is that data in practice? Be honest. The goal is an accurate picture, not an optimistic one. Write it down. You will be surprised both by how much exists and by how much you assumed existed but cannot actually access cleanly.

The second conversation is with one business or HR leader whose decisions genuinely matter. Not a stakeholder management conversation. A real conversation about the choices they are currently making without enough information, and what data-driven insights would actually help them. Listen carefully. The decisions they describe will tell you more about where to focus your analytics investment than any framework or industry benchmark ever could.

These two conversations, taken together, are the beginning of a data strategy that is grounded in reality rather than aspiration. They will not solve everything. But they will show you exactly where to start.

What comes next

In most organizations, this mapping exercise will surface gaps. Data that is needed but does not yet exist in a usable form. Decisions that matter but cannot yet be properly informed. That is not a failure of the exercise. It is the point of it.

The third newsletter in this series addresses those gaps directly, through a concept I find both practical and underused: designing for data. The idea that the technology you choose, the processes you design, the learning programs you build, and even the roles you create should all be shaped, in part, by the data you want them to generate.

Closing a data gap is not primarily a matter of finding a new tool. It is a matter of designing the conditions that make the right data possible in the first place.

That is where we go next.

Peter Meerman SLT Consulting — Learning Analytics Made Easy